Shiona McCallum, Senior Tech Reporter

Getty Images

More than 70 million warning messages have been sent to people attempting to access child sexual abuse material (CSAM) online over the past two years, the Lucy Faithfull Foundation says.

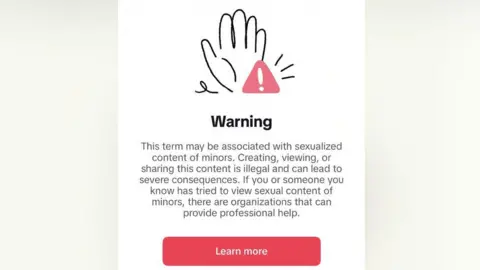

The messages are sent as part of Project Intercept, a partnership between the child protection charity and technology firms including Google, TikTok and Meta.

Rather than simply blocking content, the messages highlight the illegality of viewing CSAM and direct users to support services aimed at changing behaviour.

The foundation said nearly 700,000 people went on to access its Stop It Now resources, which offer confidential advice and self-help tools - a figure some experts say is disappointingly low.

"Given that 70 million warning messages have been sent, the fact that only 700,000 people click through to get support seems low. This is disappointing, given that the scale of the problem of child sexual abuse imagery online is growing fast," said Professor Sonia Livingstone, director of the Digital Futures for Children centre at the London School of Economics.

"On the other hand, since four in five of those people who seek support do engage with the resources provided, that suggests the system is working for those who are really motivated to get help."

Lucy Faithfull Foundation

Project Intercept is active in 131 countries and operates across a range of online spaces.

These include end-to-end encrypted services - where only the sender and recipient can view what's sent - and AI chatbot platforms.

The foundation did not specify how many individual users were responsible for the searches.

But it said engagement with the support material had been high, with an average of 28,000 users a month redirected in 2024 and 2025.

More than four in five continued to interact with the content, although the organisation did not publish data on longer-term behaviour change.

Deborah Denis, chief executive of the Lucy Faithfull Foundation, said: "By placing warnings at the moment harmful behaviour is happening, we can disrupt it and divert people towards help to change," adding that the approach could be scaled even further.

Children's charity the NSPCC said that interventions of this kind could play an important role in disrupting harmful behaviour, but should form part of a wider set of measures aimed at stopping illegal material from being created and shared in the first place.

The child protection charity said tech companies needed to go further in tackling the spread of such content.

Emma Hardy, communications director at the Internet Watch Foundation, said "innovative solutions" needed to be looked at, including on parts of the internet that are end-to-end encrypted.

"As it is, it is simply too easy to share and distribute child sexual abuse imagery online, and for children to become trapped in cycles of exploitation," she said.

"Safety by design needs to be a guiding principle and new products and platforms must be built to make sure there is nowhere for this sort of behaviour to hide."

Ofcom, the communications regulator, said warning messages formed part of its expectations under the UK's Online Safety Act.

Child Protection Policy Director Almudena Lara said the data highlighted both progress and "the scale of the problem that still needs to be addressed".

Tech firms involved said the approach complements existing moderation systems.

Griffin Hunt, a product manager at Google Search, said changes made in early 2025 had led to "greater engagement with therapeutic help services" and fewer follow-up searches for illegal material.

Mega - a company which sells encrypted cloud storage - is also involved in the project, which it said challenged the idea that encrypted services could not intervene early to address harmful behaviour.
