By Jane Wakefield

Technology reporter

Image source, Getty Images

Image caption, Trips to virtual worlds are not always pleasant for women trying them out for the first time

The metaverse is still very much at concept stage but the latest attempts to create virtual worlds are already facing an age-old problem: harassment.

Bloomberg's technology journalist, Parmy Olson, told the BBC's Tech Tent programme about her own "creepy" experiences.

Another woman likened a traumatic experience in a virtual-reality world to sexual abuse.

Meta has now announced a new feature to address the problem.

"I did have some moments when it was awkward for me as a woman," Ms Olson said of her interactions in virtual reality (VR).

She was visiting Meta's Horizon Worlds, its virtual-reality platform where anyone 18 or older can create an avatar and hang out.

To do so, users need one of Meta's VR headsets, and the space offers the chance to play games and chat to other avatars, none of whom has legs.

"I could see straight away I was the only woman, the only female avatar. And I had these men kind of come around me and stare at me silently," Ms Olson told Tech Tent.

"Then they started taking pictures of me and giving the pictures to me and I had a moment when a guy zoomed up to me and said something.

"And in virtual reality, if someone is close to you, then the voice sounds like someone is literally talking into your ear. And it took me aback."

She experienced similar discomfort in Microsoft's social VR platform.

"I was talking to another lady and within minutes of us chatting a guy came up and started chatting to us and following us around saying inappropriate things and we had to block him," she said.

"I have since heard of other women who have had similar experiences."

She said while she wouldn't describe it as harassment, it was "creepy and awkward".

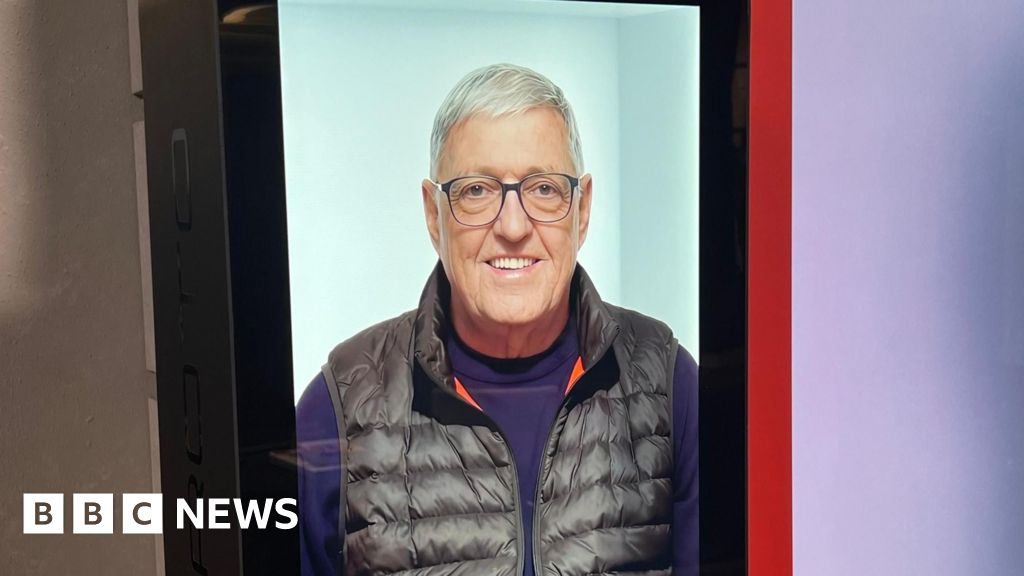

Watch: The BBC's technology correspondent Marc Cieslak enters the metaverse

Nina Jane Patel went a lot further this week when she told the Daily Mail that she was abused in Horizon Venues, likening it to sexual assault. She described how a group of male avatars "groped her" and subjected her to a stream of sexual innuendo. They photographed her and sent a message reading: "Don't pretend you didn't love it."

Meta responded to the paper saying that it was sorry. "We want everyone to have a positive experience and easily find the safety tools that can help in a situation like this - and help us investigate and take action."

It has since announced a new feature, Personal Boundary, which begins rolling out on 4 February. It prevents avatars from coming within a set distance of each other, creating more personal space for people and making it easier to avoid these unwanted interactions.

It is being made available for Horizon Worlds and Horizon Venues.

Image source, Getty Images

Image caption, Meta's chief technology officer, Andrew Bosworth, says there may have to be a trade-off between privacy and safety in virtual spaces

Moderating content in the nascent metaverse is going to be challenging, and Meta's chief technology officer, Andrew Bosworth, admitted that it would offer both "greater opportunities and greater threats".

"It could feel a lot more real to me, if you were being abusive towards me because it feels a lot more like physical space," he said in an interview with the BBC late last year.

But he said people in virtual roles would have "a great deal more power" over their environments.

"If I were to mute you, you would cease to exist for me and your ability to do harm to me is immediately nullified."

And he questioned whether people would want the kind of moderation that exists on platforms such as Facebook when having chats in virtual reality.

"Do you really want the system or a person standing by listening in? Probably not."

"So I think we have a privacy trade-off - if you want to have a high degree of content, safety or what we would call integrity, well that trades off against privacy."

And in Meta's vision of the metaverse, where different rooms are run by different companies, the trade-off gets even more complex as people move out of the Meta-controlled virtual world into others.

"I can give no guarantees about either the privacy, nor the integrity of that conversation," he said.

Image source, Getty Images

Image caption, The rules in the metaverse will be very different from those governing current online spaces

Ms Olson agreed that it was going to be "a very difficult thing for Facebook, Microsoft and others to take care of".

"When you are scanning text for hate speech, it's hard but doable - you can use machine-learning algorithms.

"To process visual information about an avatar or how close one is to another, that is going to be so expensive computationally, that is going to take up so much computer power, I don't know what technology can do that."

Facebook is investing $10bn in its metaverse plans and part of that will need to go on building new ways of moderating content.

"We have learned a tremendous amount in the last 15 years of online discourse... so we're going to bring all that knowledge with us to do the best that we can to build these things from the ground up, to give people a lot of control over their own experience," Mr Bosworth told the BBC.

Image source, Reuters

Image caption, Meta's legless avatars can have all sorts of experiences - private or communal - in the metaverse

Dr Beth Singler, an anthropologist at Cambridge University, who has studied the ethics of virtual worlds, said: "Facebook has already failed to learn about what is happening in online spaces. Yes, they have changed some of their policies but there is still material out there that shouldn't be."

There is more to learn from gaming, she thinks, where the likes of Second Life and World of Warcraft have offered virtual worlds for years, limiting who avatars can talk to and the names they can choose for them.

Meta's decision to use legless avatars may also be deliberate, she thinks. It was most likely a technical choice, given the lack of sensors for legs, but it could also be a way to limit "below the belt" issues that might arise from having a fully physical presence.

But having strict rules around what avatars can look like may bring its own problems for those "trying to express a certain identity".
